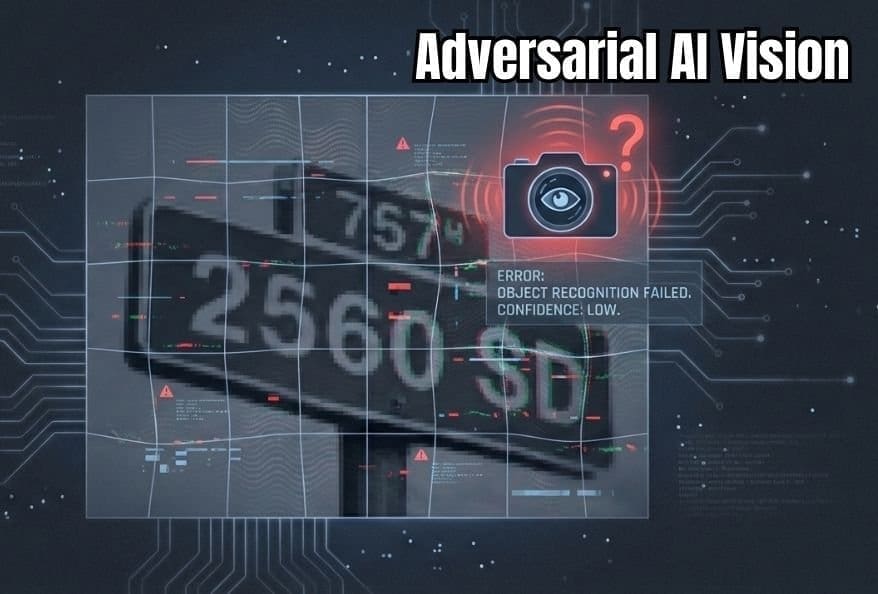

AI Adversarial Images Show Vision System Failures

A New Way to Test Artificial Intelligence Vision Using AI Adversarial Images

Adversarial images play an important role in understanding how artificial intelligence interprets visual data. AI vision systems help sort photos, scan documents, and guide machines. In most cases they perform accurately, but researchers know that these systems can also fail in unexpected ways.

In many situations, adversarial examples rely on tiny visual changes that confuse AI models. Humans usually overlook these changes, while machines react strongly to them. Because of this difference, scientists use adversarial visual examples to study hidden weaknesses in AI vision.

Recently, an open-access study from Doshisha University in Japan explored how to create more realistic adversarial images. The research focuses on improving how these deceptive visuals are designed and tested. As a result, the findings may lead to safer and more reliable AI systems.

Why Adversarial Examples Matter for AI Systems

Early AI developers focused mainly on accuracy. Over time, they discovered that even highly accurate models could make serious mistakes. An image may look completely normal, yet an AI system classifies it incorrectly.

These failures often appear when models encounter machine-confusing images. Such adversarial examples contain subtle noise that alters how AI processes visual information. People rarely notice these changes, but an AI system can change its prediction because of them.
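To make the idea concrete, here is a minimal sketch in Python with NumPy. It is a toy illustration, not the method from the study: the linear "classifier" (`w`, `b`), the helper `sign_perturbation`, and the step size `eps` are all assumptions made up for this example. It shows how shifting every pixel by at most 1% of the brightness range can be enough to flip a simple model's decision.

```python
import numpy as np

def sign_perturbation(x, w, b, eps):
    """Move each pixel by at most eps against the current decision of a
    toy linear model with score w.x + b (hypothetical helper)."""
    score = float(w @ x + b)
    # For a linear model, the gradient of the score with respect to x is w,
    # so stepping along -sign(score) * sign(w) pushes the score toward the
    # opposite class as fast as possible under a per-pixel limit.
    step = -np.sign(score) * np.sign(w)
    return np.clip(x + eps * step, 0.0, 1.0)

rng = np.random.default_rng(0)
w = rng.normal(size=784)               # assumed weights for a 28x28 image
x = rng.uniform(0.2, 0.8, size=784)    # stand-in "image", pixels in [0, 1]
b = 1.0 - float(w @ x)                 # chosen so the clean score is about +1

x_adv = sign_perturbation(x, w, b, eps=0.01)
clean_score = float(w @ x + b)
adv_score = float(w @ x_adv + b)
max_change = float(np.abs(x_adv - x).max())

print("clean score is positive:    ", clean_score > 0)
print("perturbed score is positive:", adv_score > 0)
print("largest pixel change:       ", round(max_change, 4))
```

The change to any single pixel is tiny, but the model adds up all 784 small nudges, which is why the total effect on its score is large. Real vision models are far more complex than this toy, yet the same accumulation effect is one reason small perturbations can matter.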

The risks are real. Adversarial visual examples can cause self-driving cars to misread road signs, medical tools to misclassify scans, or security systems to overlook threats. This is why stronger testing methods are now essential.

Challenges With Traditional Adversarial Image Testing

Older testing approaches usually added noise that paid little attention to the structure of the image itself. Although effective at times, this noise often looked unnatural.

Real-world images follow specific visual patterns. Traditional adversarial image techniques frequently broke those patterns, making the attacks easier for defensive systems to detect and block.

As AI becomes more advanced, researchers need deceptive visual inputs that blend naturally into images. Realistic adversarial images help create tougher and more meaningful tests for modern AI models.

A New Method for Creating Adversarial Visual Examples

To solve this problem, researchers developed a technique called Input-Frequency Adaptive Adversarial Perturbation (IFAP).

This approach examines how visual information is distributed across an image. Some areas contain smooth color transitions, while others include sharp edges and fine details. IFAP adjusts adversarial noise to match these frequency patterns.
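The general idea of matching noise to an image's frequency content can be sketched in a few lines of Python with NumPy. This is only an illustration under stated assumptions, not the IFAP algorithm from the paper: the helper names `shape_noise_to_spectrum` and `roughness`, the synthetic blob image, and the budget `eps = 8/255` are all invented here. The sketch reweights random noise by the image's normalized amplitude spectrum, so frequencies that are weak in the image are also weak in the noise.

```python
import numpy as np

def shape_noise_to_spectrum(image, noise, eps):
    """Reweight noise so its frequency content follows the image's
    amplitude spectrum, then scale it so the largest pixel change
    equals eps (hypothetical helper; assumes a non-constant-zero image)."""
    image_amp = np.abs(np.fft.fft2(image))
    envelope = image_amp / image_amp.max()      # values in [0, 1]
    # Frequencies that are weak in the image are damped in the noise too.
    shaped = np.fft.ifft2(np.fft.fft2(noise) * envelope).real
    peak = np.abs(shaped).max()
    return shaped * (eps / peak) if peak > 0 else shaped

def roughness(a):
    """Mean absolute difference between horizontally adjacent pixels."""
    return float(np.abs(np.diff(a, axis=1)).mean())

n = 32
eps = 8 / 255
yy, xx = np.mgrid[0:n, 0:n]
# A smooth synthetic "image": a soft blob with almost no fine detail.
image = np.exp(-((xx - n / 2) ** 2 + (yy - n / 2) ** 2) / (2 * 4.0 ** 2))
noise = np.random.default_rng(0).normal(size=(n, n))

raw = noise * (eps / np.abs(noise).max())       # unshaped noise, same budget
shaped = shape_noise_to_spectrum(image, noise, eps)

print("roughness of raw noise:   ", roughness(raw))
print("roughness of shaped noise:", roughness(shaped))
```

Because the blob image is dominated by low frequencies, the shaped noise should come out much smoother than the raw noise while staying within the same per-pixel budget. An actual attack would also have to choose the noise so that it misleads a model, which this sketch does not attempt.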

As a result, the adversarial images look natural to human observers, yet AI systems struggle significantly more when tested with these carefully crafted inputs.

How IFAP Improves Adversarial Image Testing

Think of an image like music, with low and high tones working together. IFAP analyzes this full visual spectrum before shaping the adversarial noise.
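The "low and high tones" of an image can be measured with a Fourier transform. The Python sketch below (using NumPy) is an assumption-laden illustration, not code from the study: the helper `band_energy_split`, the cutoff of 0.1 cycles per pixel, and the two synthetic test images are all chosen here for demonstration. It reports what share of an image's spectral energy sits below and above the cutoff.

```python
import numpy as np

def band_energy_split(image, cutoff=0.1):
    """Return (low, high) shares of spectral energy, split at `cutoff`
    cycles per pixel. Average brightness is removed first
    (hypothetical helper)."""
    centered = image - image.mean()
    power = np.abs(np.fft.fft2(centered)) ** 2
    fy = np.fft.fftfreq(image.shape[0])[:, None]
    fx = np.fft.fftfreq(image.shape[1])[None, :]
    radius = np.sqrt(fx ** 2 + fy ** 2)     # distance from zero frequency
    total = power.sum()
    if total == 0:                          # flat image: no tones at all
        return 0.0, 0.0
    high = float(power[radius > cutoff].sum() / total)
    return 1.0 - high, high

n = 32
yy, xx = np.mgrid[0:n, 0:n]
smooth = np.sin(2 * np.pi * xx / n)         # one slow wave across the image
checker = ((xx + yy) % 2).astype(float)     # pixel-level checkerboard

low_s, high_s = band_energy_split(smooth)
low_c, high_c = band_energy_split(checker)
print("smooth image  (low, high):", round(low_s, 3), round(high_s, 3))
print("checkerboard  (low, high):", round(low_c, 3), round(high_c, 3))
```

The slow wave plays the role of a single low note and the checkerboard that of a single high note, so their energy lands on opposite sides of the cutoff. Natural photographs mix both, and the mix differs from image to image.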

The outcome is a more realistic kind of adversarial example. People see little or no difference, but AI models misinterpret the images more often.

Tests on handwritten digits and real-world photographs showed that IFAP consistently outperformed older methods. Under adversarial testing conditions, AI systems failed more often, revealing deeper weaknesses.

Why Adversarial Image Research Is Important Today

AI vision tools are now part of everyday life. Online platforms rely on them to organize photos, while industries use them in factories and imaging systems.

Because of this widespread use, flaws exposed by adversarial images can affect many people. Improved testing methods help developers identify problems before systems are deployed in critical environments.

This research also supports transparency. The open-access nature of the study allows other scientists to verify and build upon the findings, strengthening AI safety research worldwide.

Conclusion: Strengthening AI Vision Through Adversarial Images

In summary, this study introduces a more realistic way to test AI vision with adversarial images. By adapting noise to the frequency content of each image, IFAP creates adversarial examples that are harder for AI to handle.

As a result, AI systems face more challenging evaluations, leading to safer and more dependable visual technologies. Even small visual changes can reveal big insights into how artificial intelligence truly sees the world.


Reference:

- Okuda, M., & Yoshida, M. (2025). IFAP: Input-frequency adaptive adversarial perturbation via full-spectrum envelope constraint for spectral fidelity. IEEE Access. https://doi.org/10.1109/ACCESS.2025.3648201