AI Vision Cars: Real-Time 3D Perception Explained

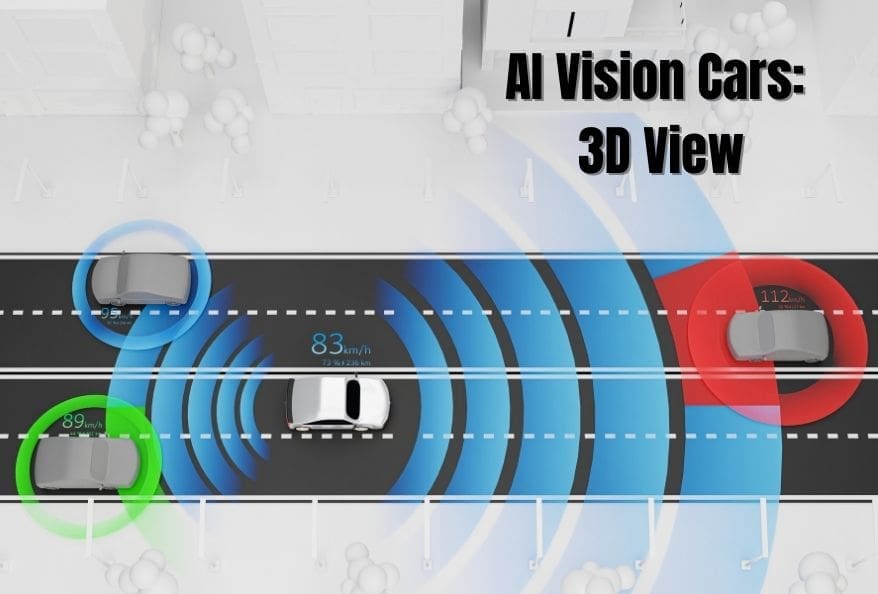

AI vision cars are transforming how autonomous vehicles perceive the world. Have you ever imagined a car that can see better than a human? For self-driving technology, instant and accurate perception is not a luxury—it is a necessity. A recent breakthrough in artificial intelligence marks a major leap in real-time 3D scene understanding, allowing cars to judge distance precisely using compact, energy-efficient hardware.

As a result, this innovation could significantly improve road safety and accelerate the adoption of fully autonomous vehicles.

The Big Problem with AI Vision in Vehicles

To begin with, AI-based vehicle vision must constantly calculate how far away objects are. Traditional camera systems often struggle with accurate depth estimation, especially at highway speeds. Humans solve this problem naturally through stereo vision: two eyes see the scene from slightly different positions, and the brain turns that small offset into a sense of depth.
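The geometry behind stereo vision can be sketched in a few lines. For a rectified pair of cameras, depth follows from the horizontal shift (disparity) of a point between the two images: Z = f × B / d. The focal length and baseline below are illustrative assumptions, roughly in the range of a car-mounted stereo rig, and are not values reported in the study.

```python
def depth_from_disparity(disparity_px: float, focal_px: float, baseline_m: float) -> float:
    """Depth Z = f * B / d for a rectified stereo pair (pinhole model)."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


# Assumed, illustrative camera parameters (not taken from the study).
FOCAL_PX = 720.0     # focal length in pixels
BASELINE_M = 0.54    # distance between the two cameras in metres

for d in (60.0, 20.0, 5.0):
    z = depth_from_disparity(d, FOCAL_PX, BASELINE_M)
    print(f"disparity {d:5.1f} px -> depth {z:6.2f} m")
```

Note how a small disparity corresponds to a large distance: far-away objects shift by only a few pixels, which is why a one-pixel error matters much more at long range.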

However, replicating this ability in machines has been computationally expensive. In the past, high accuracy required large, power-hungry computers that introduced latency. Even worse, small delays can become dangerous on fast-moving roads.

For example, driving with lagged perception is like playing a racing game with a delayed screen: you end up reacting to a road that is no longer there. Real-world vehicles cannot tolerate such delays.
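A quick back-of-the-envelope calculation shows why delay matters. The sketch below computes how far a car travels while its perception system is still processing a frame. The speed and latency values are illustrative assumptions, not measurements from the study.

```python
def distance_during_latency(speed_kmh: float, latency_ms: float) -> float:
    """Metres travelled while the perception result is still being computed."""
    speed_m_per_s = speed_kmh / 3.6
    return speed_m_per_s * latency_ms / 1000.0


# Assumed example: highway speed with three different processing delays.
for latency in (40, 100, 500):
    metres = distance_during_latency(120, latency)
    print(f"{latency:4d} ms at 120 km/h -> {metres:5.2f} m travelled blind")
```

At 120 km/h, a delay of 100 ms already corresponds to a little over 3 m of road covered before the car knows what is in front of it.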

How AI-Based Vehicle Vision Learns Human-Like Perception

To solve this challenge, researchers developed a Transformer-based neural network inspired by how the human brain processes visual data. Their system, called Foundation Stereo, is specifically optimized for embedded systems used in AI vision vehicles.

In addition, the team deployed the model on the NVIDIA Jetson AGX Orin, a compact processor designed for robotics and edge AI. Their objective was clear: deliver near–desktop-level vision performance in hardware small enough for consumer vehicles.

Real-World Testing on Traffic Data

To validate the system, researchers tested it using the well-known KITTI dataset, which includes thousands of real-world traffic scenes. This let them measure both speed and accuracy on a widely used benchmark.

The results were impressive. The embedded system generated detailed 3D depth maps in milliseconds—fast enough for highway driving. Moreover, error rates remained low, and the model consistently detected vehicles, pedestrians, and road structures.
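One common way to express a stereo "error rate" on KITTI-style data is the outlier fraction: the share of pixels whose predicted disparity is off by more than 3 pixels and by more than 5% of the true value. The article does not say which metric the researchers reported, so the following is only a minimal sketch of that convention, with made-up sample values.

```python
def outlier_rate(pred, gt, abs_thresh=3.0, rel_thresh=0.05):
    """Fraction of valid pixels whose disparity error exceeds both thresholds."""
    valid = 0
    outliers = 0
    for p, g in zip(pred, gt):
        if g <= 0:          # no ground truth available for this pixel
            continue
        valid += 1
        err = abs(p - g)
        if err > abs_thresh and err > rel_thresh * g:
            outliers += 1
    return outliers / valid if valid else 0.0


# Made-up disparities in pixels; a ground truth of 0.0 marks a missing measurement.
predicted    = [50.0, 30.5, 12.0, 80.0, 7.0]
ground_truth = [50.5, 30.0, 20.0, 0.0, 7.2]
print(f"outlier rate: {outlier_rate(predicted, ground_truth):.2%}")
```

Pixels without ground truth are skipped because LiDAR-derived reference data is sparse and does not cover every pixel.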

Overall, this represents a major milestone for real-time perception in AI vision vehicles.

Balancing Speed and Detail in Vehicle Perception

Although desktop GPUs remain faster, the performance gap is shrinking. Notably, the embedded system achieved over 26 frames per second, which falls within the range commonly treated as smooth, real-time video (roughly 24 to 30 frames per second).
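A frame rate translates directly into a time budget: everything the system does for one frame has to finish within 1000 / fps milliseconds. The helper names below are hypothetical and serve only to make the arithmetic explicit.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available per frame, in milliseconds, at a given frame rate."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


def meets_realtime(inference_ms: float, target_fps: float) -> bool:
    """True if one inference pass fits inside the per-frame budget."""
    return inference_ms <= frame_budget_ms(target_fps)


print(f"budget at 26 fps: {frame_budget_ms(26):.1f} ms per frame")
print("35 ms inference fits:", meets_realtime(35.0, 26))
print("45 ms inference fits:", meets_realtime(45.0, 26))
```

At 26 frames per second the budget is about 38.5 ms, so image capture, depth estimation, and object detection all have to share that window.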

Older depth-estimation methods produced slow and unstable results. In contrast, this system delivers continuous and stable perception. As a result, vehicles using AI-based vision can plan safer driving paths with greater confidence.

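Additionally, the system creates dense 3D point clouds, where each point marks a measured position on the surface of a physical object. Denser point clouds give the vehicle's planning software more detail to work with, which supports more accurate navigation and better passenger safety.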
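A depth map becomes a point cloud by back-projecting each pixel through the camera model. The sketch below uses a simple pinhole camera and plain Python lists. The intrinsics (fx, fy, cx, cy) and the tiny depth map are assumptions chosen for illustration, not values from the study.

```python
def depth_to_points(depth, fx, fy, cx, cy):
    """Back-project a depth map (rows of metres) into (x, y, z) camera-frame points."""
    points = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z <= 0:      # skip pixels with no valid depth
                continue
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            points.append((x, y, z))
    return points


# Toy 2 x 2 depth map in metres; 0.0 marks an invalid pixel.
depth_map = [
    [2.0, 0.0],
    [4.0, 4.0],
]
cloud = depth_to_points(depth_map, fx=2.0, fy=2.0, cx=0.5, cy=0.5)
print(len(cloud), "points:", cloud)
```

Every valid pixel yields one 3D point, which is why a dense depth map produces a dense point cloud.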

Why AI Vision Cars Matter

At present, vision reliability remains one of the biggest barriers to fully autonomous driving. Fortunately, this research brings the industry much closer to overcoming that challenge.

Furthermore, the study introduces cooperative perception. For instance, one car can detect a hazard and instantly share that information with other vehicles. In this way, connected vehicles can collectively improve road awareness.
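To make the idea of sharing a hazard concrete, here is a minimal sketch of what such a message might look like. The `HazardReport` structure, its field names, and the two-second freshness window are all hypothetical. The article does not describe the message format or the communication protocol used in the study.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class HazardReport:
    """Hypothetical hazard message exchanged between vehicles."""
    sender_id: str
    hazard_type: str
    latitude: float
    longitude: float
    timestamp_s: float

    def to_json(self) -> str:
        return json.dumps(asdict(self))

    @staticmethod
    def from_json(payload: str) -> "HazardReport":
        return HazardReport(**json.loads(payload))


def is_fresh(report: HazardReport, now_s: float, max_age_s: float = 2.0) -> bool:
    """Ignore reports that are too old (or time-stamped in the future)."""
    return 0.0 <= now_s - report.timestamp_s <= max_age_s


sent = HazardReport("car-17", "stalled_vehicle", 48.1486, 17.1077, timestamp_s=1000.0)
received = HazardReport.from_json(sent.to_json())
print(received, "fresh:", is_fresh(received, now_s=1001.2))
```

Keeping the message small and time-stamped reflects the point of the paragraph above: the heavy perception work stays in each car, and only the compact result is shared.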

Importantly, most processing happens directly inside the vehicle using edge computing. This reduces cloud dependency, lowers latency, and saves bandwidth.

Building Digital Twins With AI Vision Cars

With this technology, AI vision cars act as mobile scanners that continuously update digital maps. Static infrastructure is recorded, while moving objects are filtered out, resulting in clean and accurate city models.

As a result, cities can detect road damage earlier, plan repairs faster, and improve overall urban safety.
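The step of keeping static infrastructure while dropping moving objects can be sketched as a filter followed by a coarse 3D grid. This assumes each 3D point already carries a class label from the object detector. The label set, the voxel size, and the function name are illustrative assumptions rather than details from the study.

```python
import math

# Assumed set of labels treated as moving objects.
DYNAMIC_CLASSES = {"car", "pedestrian", "cyclist"}


def static_voxels(labelled_points, voxel_size_m=0.5):
    """Return the set of occupied voxels, ignoring points on dynamic objects."""
    voxels = set()
    for (x, y, z), label in labelled_points:
        if label in DYNAMIC_CLASSES:
            continue
        voxels.add((
            math.floor(x / voxel_size_m),
            math.floor(y / voxel_size_m),
            math.floor(z / voxel_size_m),
        ))
    return voxels


scan = [
    ((1.2, 0.1, 5.0), "road"),
    ((1.3, 0.2, 5.1), "road"),   # lands in the same voxel as the point above
    ((2.0, 0.0, 6.0), "car"),    # moving object, filtered out
]
print(static_voxels(scan))
```

Because the result is a set, repeated drives over the same street simply confirm existing voxels instead of duplicating them.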

Optimizing AI Vision Cars for Efficiency

To further enhance performance, researchers used TensorRT to optimize the AI model. This reduced computational load and improved inference speed.

After testing multiple configurations, they found that a 256 × 256 pixel resolution provided the best balance between speed and detail. Equally important, the system consumes far less power than desktop-class hardware, an essential advantage for electric AI vision cars.
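Two pieces of arithmetic help explain the resolution trade-off. First, fewer pixels means less work per frame. Second, disparities measured on a downscaled image have to be scaled back by the width ratio before they can be used with the original camera calibration. The full-frame size below (about 1242 × 375, typical of KITTI images) is an approximation used for illustration, and the function names are hypothetical.

```python
def pixel_ratio(full_w: int, full_h: int, small_w: int, small_h: int) -> float:
    """How many times more pixels the full frame has than the downscaled input."""
    return (full_w * full_h) / (small_w * small_h)


def disparity_at_full_width(disparity_small_px: float, small_w: int, full_w: int) -> float:
    """Rescale a disparity measured on a resized image back to full-width pixels."""
    return disparity_small_px * full_w / small_w


FULL_W, FULL_H = 1242, 375   # approximate full-frame size (assumption)

print(f"full frame has {pixel_ratio(FULL_W, FULL_H, 256, 256):.1f}x the pixels of 256 x 256")
print(f"10 px at width 256 -> {disparity_at_full_width(10.0, 256, FULL_W):.1f} px at full width")
```

The cost of the smaller input is precision: one pixel of disparity at width 256 stands for several pixels at full width, so distant objects are resolved more coarsely.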

Cameras vs LiDAR in AI Vision Cars

Traditionally, autonomous vehicles have relied on LiDAR sensors, which are accurate but expensive. Cameras, on the other hand, are affordable but less precise at measuring depth.

However, this AI-driven approach allows AI vision cars to achieve LiDAR-like depth perception using cameras alone. As a result, advanced safety features become more affordable and accessible.

What’s Next for AI Vision Cars

Looking ahead, researchers plan to test higher resolutions and challenging conditions such as rain, fog, and low light. As embedded processors improve, AI vision cars will gain even clearer and faster perception.

The long-term goal is for these systems to cope with all weather conditions, enabling safe autonomous driving year-round.

Final Thoughts on AI Vision Vehicles

In conclusion, this research represents a major step forward for AI vision cars. It shows that advanced AI models can run efficiently on small, low-power hardware while keeping error rates low.

In the near future, cars will operate as real-time 3D supercomputers—sharing safety data, updating digital maps, and reacting instantly to their surroundings. Ultimately, AI vision cars will help create safer roads and smarter cities for everyone.

To stay updated with the latest developments in STEM research, visit ENTECH Online, our digital magazine for science, technology, engineering, and mathematics.

Reference:

- Simeonov, M., Dado, M., & Kurdiumov, A. (2025). Real-time 3D scene understanding for road safety: Depth estimation and object detection for autonomous vehicle awareness. Vehicles, 8(2), 28. https://doi.org/10.3390/vehicles8020028